If someone had asked you ten years ago, “What is the definition of technology?” you might have answered without much thought: computers, smartphones, machines, maybe the internet. Simple enough.

Today, that same question feels heavier.

Is technology just hardware and software—or does it include the systems behind how we work, communicate, learn, and even think? Is a spreadsheet technology? Is a process? Is artificial intelligence a tool, a partner, or something else entirely?

These aren’t abstract questions anymore. They affect how businesses invest, how students prepare for the future, how governments regulate innovation, and how individuals decide what to trust, adopt, or avoid.

This article is written for:

- Curious beginners who want a clear, grounded explanation

- Professionals who use “technology” daily but want deeper clarity

- Business owners, educators, creators, and decision-makers

- Anyone who feels technology is shaping their life faster than they can define it

By the end, you won’t just know what the definition of technology is—you’ll understand how it evolved, why it matters, how it’s used in the real world, and how to think about it critically and confidently going forward.

No buzzwords. No shallow explanations. Just a practical, human understanding of one of the most powerful forces shaping modern life.

What Is the Definition of Technology? A Clear, Practical Explanation

At its most fundamental level, the definition of technology is:

Technology is the application of knowledge, tools, techniques, and systems to solve problems, achieve goals, or extend human capabilities.

That sentence may sound straightforward, but it contains layers worth unpacking.

Technology is not limited to digital tools. A hammer is technology. So is writing. So are agriculture, plumbing, and timekeeping. Technology exists wherever humans intentionally use knowledge to create solutions.

Think of it this way:

- Science asks why things happen

- Technology asks how we can use that understanding

A smartphone didn’t appear because someone wanted a gadget—it emerged from centuries of accumulated knowledge in physics, materials science, engineering, design, and human behavior.

Another helpful way to understand technology is to see it as a bridge:

- Between ideas and execution

- Between limitations and possibilities

- Between human intention and real-world impact

Importantly, technology includes:

- Physical tools (machines, devices, infrastructure)

- Digital tools (software, platforms, algorithms)

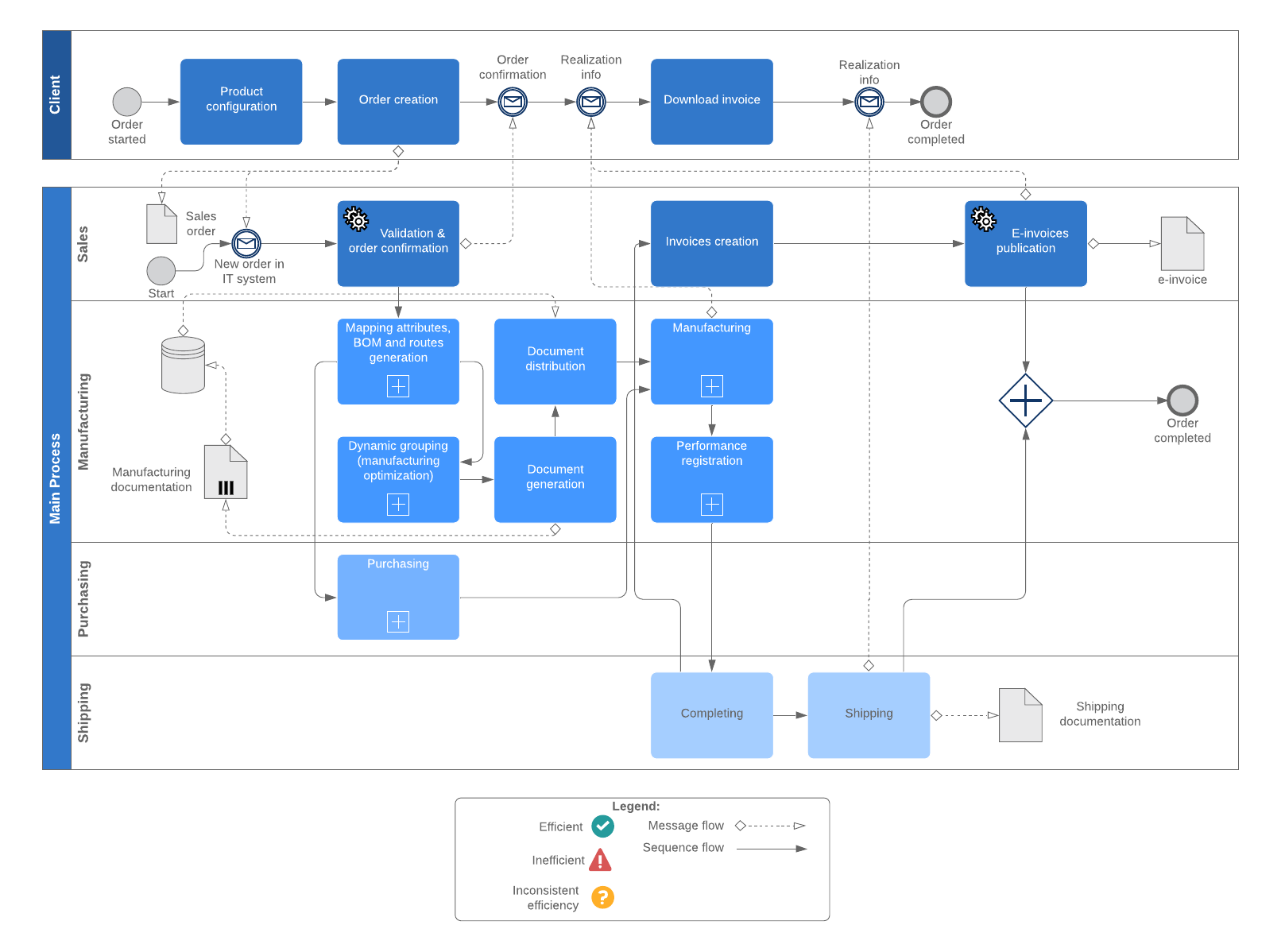

- Processes (methods, workflows, systems)

- Knowledge applied in repeatable ways

That’s why a manufacturing system, a hospital workflow, and a marketing automation funnel all qualify as technology, even though none of them is a single device you simply plug into a wall.

The mistake many people make is equating technology solely with electronics. In reality, electronics are just the most visible layer of a much broader concept.

How the Definition of Technology Has Evolved Over Time

The meaning of technology has never been static. It shifts as human needs, capabilities, and environments change.

Early Human Technology: Survival First

The earliest technologies were simple but transformative:

- Stone tools for hunting and protection

- Controlled use of fire for warmth, cooking, and safety

- Basic shelters for survival

These weren’t luxuries. They were responses to immediate, life-or-death problems.

Agricultural and Mechanical Technology

As societies stabilized, technology shifted toward:

- Farming tools and irrigation systems

- Wheels, pulleys, and levers

- Written language for record-keeping

Technology became about efficiency and scale, not just survival.

Industrial Technology

The Industrial Revolution redefined technology again:

- Steam engines and factories

- Mass production systems

- Transportation networks

Here, technology began reshaping economies, labor, and social structures—not just tools, but entire ways of living.

Digital and Information Technology

The second half of the 20th century introduced:

- Computers and software

- Digital telecommunications

- The internet

Technology became faster, more abstract, and deeply interconnected. Information itself became a resource.

Today: Systems, Intelligence, and Integration

Now, technology includes:

- Artificial intelligence

- Cloud computing

- Automation and robotics

- Data-driven decision systems

The definition has expanded from tools we use to systems we live inside.

Understanding this evolution matters because it shows that technology isn’t something that suddenly “arrived.” It’s something humanity has always been building—layer by layer.

Why the Definition of Technology Matters in the Real World

You might wonder why defining technology precisely even matters. Isn’t it obvious?

In practice, unclear definitions cause real problems.

In Business

When leaders don’t understand what technology truly includes:

- They overspend on tools but ignore processes

- They buy software without changing workflows

- They mistake “digital transformation” for app adoption

Clear thinking about technology leads to better decisions, smarter investments, and sustainable growth.

In Education

Students often learn tools without understanding systems:

- How technology shapes thinking

- How tools influence outcomes

- How to adapt when tools change

A strong definition builds transferable skills, not just tool familiarity.

In Society

Public debates around privacy, AI, automation, and ethics often fail because:

- Technology is treated as neutral or inevitable

- Human responsibility is overlooked

- Social consequences are underestimated

Understanding what technology is helps us decide what it should be.

Benefits and Real-World Use Cases of Technology

Technology delivers value when it solves real problems. Let’s look at who benefits and how.

Individuals

For everyday people, technology:

- Saves time (navigation apps, automation)

- Increases access (online learning, remote work)

- Enhances communication (messaging, video calls)

Without these tools: limited reach, slower processes

With them: expanded opportunity, faster execution

Businesses

Organizations use technology to:

- Scale operations efficiently

- Analyze data for better decisions

- Automate repetitive tasks

- Improve customer experiences

The difference between struggling businesses and thriving ones is often how technology is applied—not how much is purchased.

Healthcare

Technology enables:

- Faster diagnosis

- Remote patient monitoring

- Precision treatments

- Data-driven care plans

Applied well, it can reduce errors, save lives, and extend care beyond the walls of physical hospitals.

Education

Modern education relies on:

- Learning management systems

- Digital collaboration tools

- Adaptive learning platforms

Used well, technology can personalize education instead of standardizing it.

Government and Infrastructure

Technology supports:

- Public safety systems

- Transportation networks

- Digital identity and services

When designed well, it increases transparency and efficiency.

The common thread across all these examples? Technology works best when it’s aligned with human needs—not imposed for its own sake.

A Step-by-Step Guide to Understanding and Applying Technology Effectively

Understanding the definition of technology is only useful if you can apply it wisely. Here’s a practical framework.

Step 1: Identify the Real Problem

Before adopting any technology, ask:

- What friction exists?

- Where is time, money, or effort being wasted?

- What outcome actually matters?

Technology should solve problems, not create new ones.

Step 2: Understand the System, Not Just the Tool

Every technology exists within a system:

- People

- Processes

- Constraints

- Goals

Ignoring the system often leads to failed implementations.

Step 3: Choose the Simplest Effective Solution

More advanced doesn’t always mean better.

- Simple tools are easier to maintain

- Complexity increases risk

- Adoption matters more than features

Step 4: Integrate, Don’t Isolate

Technology works best when:

- It connects to existing workflows

- It reduces cognitive load

- It feels natural to use

Step 5: Measure Outcomes, Not Usage

Success isn’t:

- Logins

- Features used

- Time spent

Success is:

- Time saved

- Errors reduced

- Results improved

This mindset separates strategic technology users from constant tool-chasers.
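For readers who track results in a spreadsheet or a small script, Step 5 comes down to simple arithmetic. The Python sketch below compares a hypothetical week before and after adopting a tool. The field names (`tasks_completed`, `minutes_per_task`, `errors`, `logins`) and every number are invented for illustration, not drawn from any real tool or study. The report counts hours saved and the error rate, and deliberately leaves logins out, since that is exactly the kind of usage metric the step warns against.

```python
from dataclasses import dataclass


@dataclass
class Period:
    """Hypothetical measurements for one period, such as one week."""
    tasks_completed: int
    minutes_per_task: float
    errors: int
    logins: int  # a usage metric, recorded only to show it is NOT the goal


def outcome_report(before: Period, after: Period) -> dict:
    """Compare two periods on outcomes (time and errors), ignoring usage."""
    # Time saved: minutes saved per task, scaled by the work actually done.
    minutes_saved_per_task = before.minutes_per_task - after.minutes_per_task
    hours_saved = minutes_saved_per_task * after.tasks_completed / 60

    # Error rate per task, so periods with different volumes stay comparable.
    error_rate_before = before.errors / before.tasks_completed
    error_rate_after = after.errors / after.tasks_completed

    return {
        "hours_saved": round(hours_saved, 1),
        "error_rate_before": round(error_rate_before, 3),
        "error_rate_after": round(error_rate_after, 3),
        "errors_reduced": error_rate_after < error_rate_before,
    }


# Made-up example figures for a single team.
before = Period(tasks_completed=200, minutes_per_task=12, errors=10, logins=0)
after = Period(tasks_completed=200, minutes_per_task=9, errors=4, logins=850)

print(outcome_report(before, after))
# prints {'hours_saved': 10.0, 'error_rate_before': 0.05, 'error_rate_after': 0.02, 'errors_reduced': True}
```

With these made-up figures, the report shows 10 hours saved and the error rate falling from 5% to 2%. The 850 logins never enter the calculation, because a busy tool is not the same thing as a useful one.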

Tools, Comparisons, and Expert Recommendations

Free vs Paid Technology Tools

Free tools are great for:

- Learning

- Small-scale experimentation

- Simple workflows

Paid tools often offer:

- Better support

- Scalability

- Security and reliability

The right choice depends on:

- Risk tolerance

- Growth plans

- Criticality of the task

Beginner vs Advanced Tools

Beginner tools prioritize:

- Ease of use

- Fast setup

- Minimal configuration

Advanced tools offer:

- Customization

- Deeper analytics

- Integration capabilities

Many people fail by jumping to advanced tools too early.

Lightweight vs Professional Systems

Lightweight tools are ideal when:

- Speed matters

- Teams are small

- Flexibility is needed

Professional systems shine when:

- Compliance is required

- Scale is non-negotiable

- Data integrity is critical

Expert advice: outgrow tools intentionally, not impulsively.

Common Mistakes People Make with Technology (and How to Fix Them)

Mistake 1: Treating Technology as a Shortcut

Technology amplifies existing habits—good or bad.

Fix: improve processes before automating them.

Mistake 2: Ignoring Human Behavior

People resist tools they don’t understand.

Fix: invest in onboarding, training, and feedback loops.

Mistake 3: Overcomplicating Solutions

More features ≠ better outcomes.

Fix: ruthlessly simplify.

Mistake 4: Confusing Trends with Strategy

Not every new technology fits every context.

Fix: align adoption with long-term goals.

Mistake 5: Forgetting Maintenance and Evolution

Technology isn’t “set and forget.”

Fix: plan for updates, reviews, and eventual replacement.

What most people miss is that technology management is ongoing work, not a one-time purchase.

The Human Side of Technology: Ethics, Responsibility, and Balance

One of the most overlooked parts of the definition of technology is responsibility.

Technology shapes:

- Attention

- Behavior

- Power dynamics

Every design decision embeds values—whether intentional or not.

Questions worth asking:

- Who benefits from this technology?

- Who might be harmed?

- What trade-offs are being made?

- Can humans override the system when needed?

Healthy technology adoption balances:

- Efficiency with empathy

- Automation with accountability

- Innovation with restraint

Technology should expand human potential—not replace judgment, creativity, or ethics.

Conclusion: Understanding Technology Is a Skill, Not Just Knowledge

So, what is the definition of technology?

It’s not just machines or software. It’s human ingenuity applied through tools, systems, and processes to shape the world intentionally.

When you understand technology this way:

- You make better decisions

- You avoid hype-driven mistakes

- You gain confidence instead of overwhelm

Technology isn’t something happening to you.

It’s something you can learn to use on purpose.

The next step is simple: observe how technology shows up in your own life or work—and ask whether it’s truly serving you.

That’s where mastery begins.

FAQs

What is the simplest definition of technology?

Technology is the practical use of knowledge to solve problems or achieve goals.

Is technology only digital?

No. Tools, processes, and systems—digital or physical—are all forms of technology.

How is technology different from science?

Science explains why things happen; technology applies that understanding in practical ways.

Can processes be considered technology?

Yes. Repeatable systems designed to achieve outcomes are technological.

Why is technology important in daily life?

It saves time, increases access, improves efficiency, and expands human capabilities.

Adrian Cole is a technology researcher and AI content specialist with more than seven years of experience studying automation, machine learning models, and digital innovation. He has worked with multiple tech startups as a consultant, helping them adopt smarter tools and build data-driven systems. Adrian writes simple, clear, and practical explanations of complex tech topics so readers can easily understand the future of AI.